- Blog

- New nepali lok geet 2018

- Daz studio genesis 3 female lip biting pose

- Red dead redemption 2 online update

- Dsp 0501 controller

- Julyjailbait porn

- Malena movie 2000 full

- Export realflow to cinema 4d

- F e olds ambassador cornet

- Gmail desktop calendar

- Samsung gear fit manager pc app download

- Arturia spark le manual

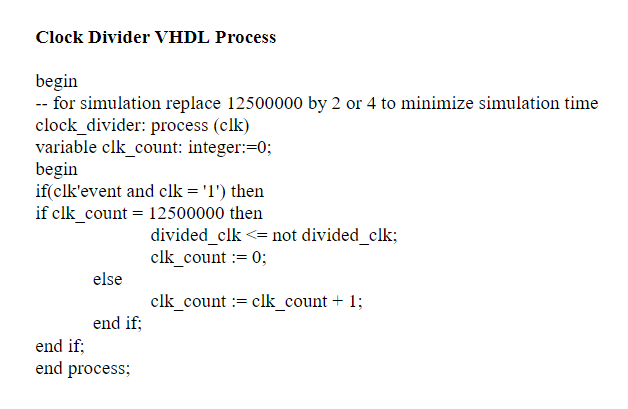

- Intel fpga simulation

- Gom player osx

- Juego de the king of fighters 2002 magic plus 2

- Boa vs python temperament

Fifty years ago, on 15 November 1971, a prophetic advertisement in Electronic News appeared to announce “a new era of integrated electronics.” The debut of Intel’s 4004 ushered in the revolution of the general-purpose programmable processor and, by extension, the modern computer age.

Gordon Moore and Bob Noyce didn’t found Intel in 1968 to design microprocessors. Instead, their focus was firmly on memories. In October 1970, Intel introduced the 1103 DRAM, which laid the foundation for becoming the world’s largest memory company later in the decade. While the startup waited for the memory business to take off, however, it needed all the business it could get.

And so, in 1969, Intel accepted a contract from the Japanese manufacturer Business Computer Corporation (Busicom) to manufacture the chips for its 141-PF calculator. Busicom had come up with a fixed-purpose design requiring twelve ICs, three of which were special-purpose processing units. The Japanese company wanted Intel to design and manufacture these chips. Intel, however, determined that Busicom’s design was too complex and, crucially, couldn’t be realized using Intel’s standard 16-pin packaging. This prompted Intel engineer Marcian ‘Ted’ Hoff to come up with a more efficient design that comprised only four chips. The processing unit in this chipset, the Intel 4004, was destined to become the world’s first monolithic commercial general-purpose microprocessor.

The addition of “monolithic” and “commercial” is important here because the term “microprocessor” was already in use by then. It was coined in 1968 by the Massachusetts startup Viatron Computer Systems to describe an 18-chip minicomputer. In addition, commercial products with a microprocessor on board had been released, but the 4004 was the first processor to be sold as a component on the general market.

The 4004 was extremely challenging to realize and there wasn’t much time to get it done. “When I saw the project schedules that were promised to Busicom, my jaw dropped: I had less than six months to design four chips, one of which, the CPU, was at the boundary of what was possible; a chip of that complexity had never been done before. I had nobody working for me to share the workload; Intel had never done random-logic custom chips before,” recalls Federico Faggin, who was hired to do the project in 1970.

The Italian was the right man for the job, however. Before joining Intel in 1970, he’d been working at Fairchild Semiconductor on metal-oxide-semiconductor (MOS) technology, which some believed could prove superior to the incumbent bipolar transistor technology. In fact, Faggin focused on what proved to be an essential ingredient to make MOS-based microprocessors competitive.

Early MOS transistors were plagued by a parasitic capacitance effect caused by excessive overlap of the gate and the source and drain regions. This could be avoided by forming the gate first and using it to define the source and drain regions, resulting in perfect alignment every time. Unfortunately, the aluminum used to make the gate electrode wasn’t compatible with that process, since this metal can’t withstand the high temperatures required to form the source and drain junctions.

Building on research at Bell Labs, Faggin managed to replace aluminum with polysilicon, opening the door to self-aligned gates. Supplemented by two additional innovations, chips featuring silicon-gate technology (SGT) proved far superior to their aluminum-gate predecessors. SGT allowed integration of about twice the number of random-logic transistors on the same chip size, achieving five to ten times the speed of the incumbent technology for the same power dissipation.

Soon after Faggin’s SGT breakthrough, Gordon Moore and Bob Noyce left Fairchild and started Intel. A year later, in 1969, their company launched an SGT MOS product, a 256-bit RAM. Frustrated by the slow adoption of SGT at Fairchild, which had been crippled by the departure of Moore, Noyce and others, Faggin decided to jump ship as well. Looking to make the most of the technology he had made fab-ready, he applied for a job at Intel in April 1970. “I was young and eager to prove myself in my newly chosen field.
